The Foundation of Ethical Intelligence

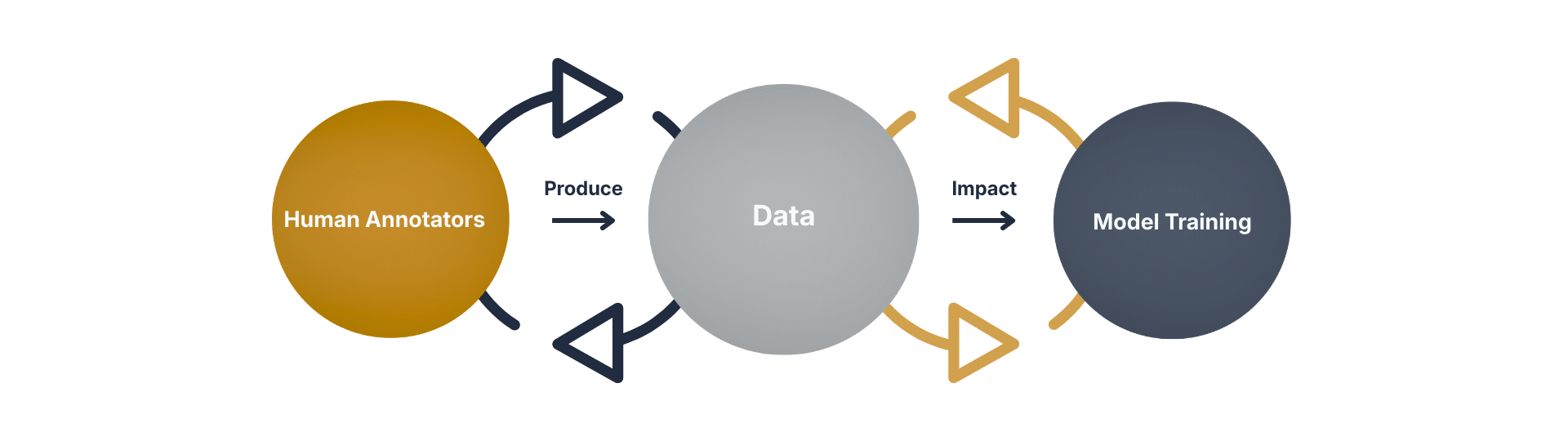

In machine learning, data isn’t just fuel—it’s the blueprint. The accuracy, fairness, and resilience of any model depend on the integrity of its training data. Poorly labeled or biased datasets can lead to flawed predictions, systemic errors, and unintended consequences.

Whether you’re building a recommendation engine or a civic surveillance system, the principle remains: garbage in, garbage out.
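To make "garbage in" concrete, here is a minimal sketch of a label audit run before training. It is not Sentinel Watch's methodology; the function name `audit_labels`, the `(text, label)` record shape, and the sample rows are all assumptions made for illustration. It surfaces three of the problems named above: missing labels, identical inputs that carry conflicting labels, and a skewed class balance.

```python
from collections import Counter, defaultdict


def audit_labels(records):
    """Report basic quality signals for an iterable of (text, label) pairs.

    Hypothetical helper for illustration only.
    """
    label_counts = Counter()
    labels_by_text = defaultdict(set)
    missing = 0

    for text, label in records:
        # Count rows with no usable label and skip them.
        if label is None or label == "":
            missing += 1
            continue
        label_counts[label] += 1
        # Normalize case and whitespace so near-identical inputs are grouped.
        labels_by_text[text.strip().lower()].add(label)

    # Inputs that appear with more than one distinct label are likely mislabeled.
    conflicts = sorted(t for t, labels in labels_by_text.items() if len(labels) > 1)

    # Share of each class among labeled rows; a heavy skew can hint at bias.
    total = sum(label_counts.values())
    shares = {label: count / total for label, count in label_counts.items()} if total else {}

    return {"missing": missing, "conflicts": conflicts, "shares": shares}


# Made-up sample data, not drawn from any real dataset.
sample = [
    ("great service", "positive"),
    ("Great service", "negative"),
    ("slow response", "negative"),
    ("no comment", None),
]
report = audit_labels(sample)
print(report)
```

A check like this will not prove a dataset is fair or accurate, but it can flag obvious defects before they reach a model.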

Sentinel Watch: Curating Signals That Matter

At Sentinel Watch™, we treat data as infrastructure. Our platform is designed to support adaptive machine learning systems with high-integrity datasets—curated to reflect real-world complexity without compromising ethical standards.

We don’t just collect data. We listen to signals, filter noise, and align inputs with civic impact. Our approach helps:

- Listen to signals

We won’t disclose our full methodology here—but know this: quality isn’t an afterthought. It’s the architecture.

Why It Matters

In a world increasingly shaped by AI, the quality of training data determines whether systems serve or surveil, empower or exploit. Sentinel Watch™ is committed to the former.

References

- Adata Pro (2025). “The Importance of High-Quality Training Data for Building Machine Learning Models.” Emphasizes that high-quality data ensures accuracy, reduces bias, and prevents flawed predictions from noise or mislabeling.

- V7 Labs (2025). “Training Data Quality: Why It Matters in Machine Learning.” Details how clean, diverse, and ethically sourced data is essential for model performance and fairness.

- LakeFS (2025). “Why Data Quality Is Key For ML Model Development.” Reinforces the “garbage in, garbage out” principle, stressing integrity in training data for resilient AI systems.